New Scientific Discoveries in AI: Emotional Voice Control and Suicide Prevention

The New Frontier of Mental Health: AI, Emotional Voice Control, and Suicide Prevention

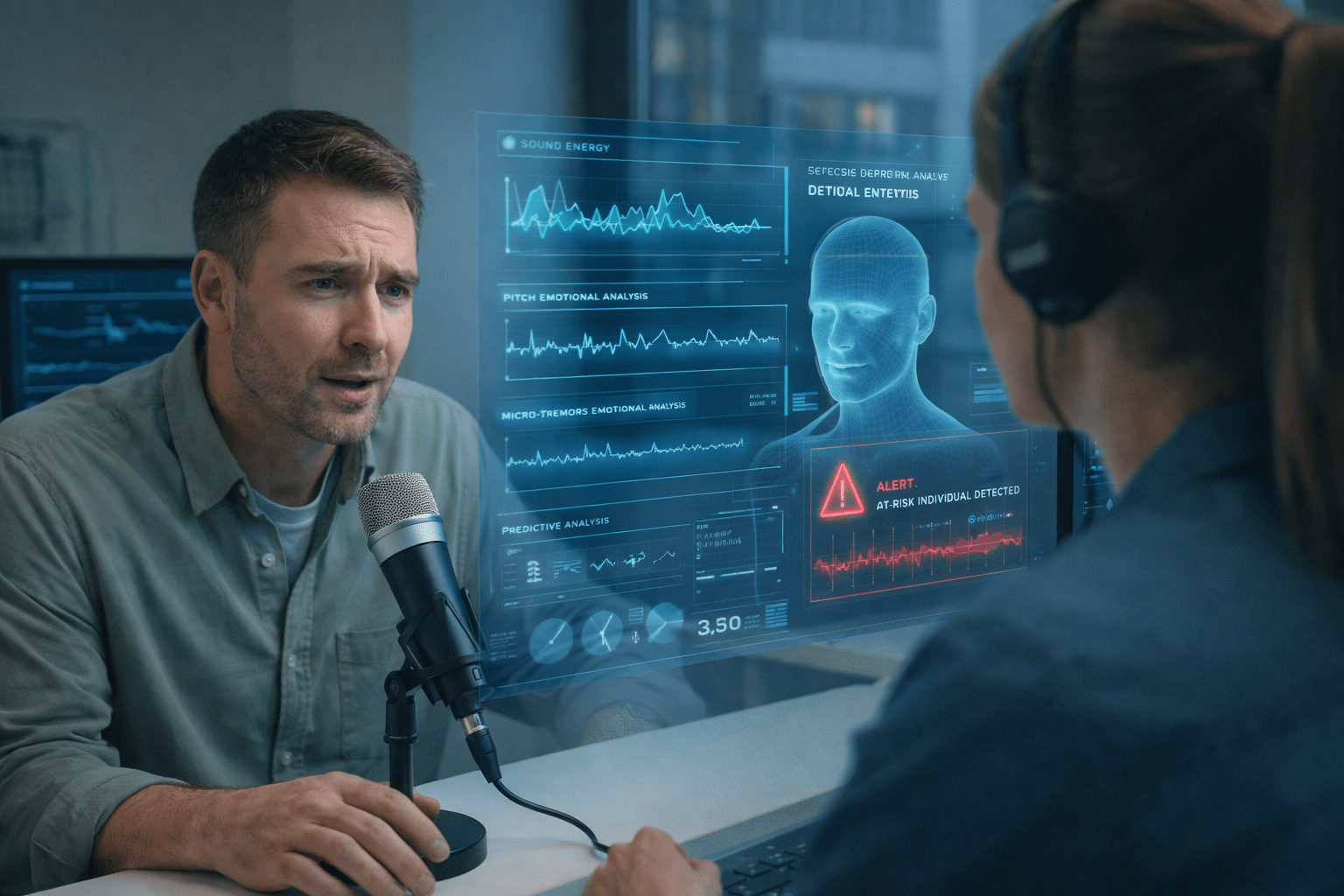

In recent years, the intersection of Artificial Intelligence and mental health has evolved from a futuristic concept into a life-saving reality. Two of the most significant breakthroughs in this field are Emotional Voice Control (EVC) and Predictive AI for Suicide Prevention. By “listening” between the lines, AI is beginning to identify cries for help that the human ear might miss.

- Beyond Words: The Science of Emotional Voice Control

Emotional Voice Control uses deep learning to analyze vocal biomarkers: subtle changes in pitch, rhythm, and tone that correlate with neurological states.

- Vocal Biomarkers: Research has shown that individuals suffering from severe depression or suicidal ideation often exhibit “flattened affect” or specific micro-tremors in their vocal cords.

- Real-time Analysis: New AI models can process these nuances in real time, with the goal of distinguishing temporary stress from a deep-seated mental health crisis.
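The article does not say how pitch is measured. One classic textbook approach to quantifying "flattened" prosody is to estimate the fundamental frequency (F0) of short frames by autocorrelation and then measure how widely those estimates spread, in semitones. The minimal sketch below assumes that approach. `estimate_f0`, `pitch_range_semitones`, and the 75-400 Hz search band are illustrative choices, not part of any Emotional Voice Control system, and synthetic tones stand in for real speech.

```python
import math

def estimate_f0(frame, sample_rate, fmin=75.0, fmax=400.0):
    """Estimate one frame's fundamental frequency (Hz) as the lag with the
    largest autocorrelation inside the [fmin, fmax] pitch band.
    Returns None when no lag correlates positively (e.g. silence)."""
    min_lag = int(sample_rate / fmax)
    max_lag = min(int(sample_rate / fmin), len(frame) - 1)
    best_lag, best_corr = None, 0.0
    for lag in range(min_lag, max_lag + 1):
        corr = sum(frame[i] * frame[i + lag] for i in range(len(frame) - lag))
        if corr > best_corr:
            best_lag, best_corr = lag, corr
    return sample_rate / best_lag if best_lag else None


def pitch_range_semitones(f0_values):
    """Spread between the highest and lowest voiced F0, in semitones.
    A persistently small value is one crude proxy for a monotone voice."""
    voiced = [f for f in f0_values if f]
    if len(voiced) < 2:
        return 0.0
    return 12 * math.log2(max(voiced) / min(voiced))


# Synthetic 40 ms frames at 16 kHz stand in for real speech.
SR = 16000

def tone(freq, n=640):
    return [math.sin(2 * math.pi * freq * i / SR) for i in range(n)]

f0s = [estimate_f0(tone(f), SR) for f in (200, 250)]
print(f0s, round(pitch_range_semitones(f0s), 2))  # [200.0, 250.0] 3.86
```

Because each frame is handled independently, the same functions could run on a live audio stream. Production pitch trackers add normalization, voicing decisions, and octave-error correction that this sketch leaves out.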

- Predictive Modeling in Suicide Prevention

One of the most critical challenges in psychiatry is the “prediction gap”: many individuals at high risk never explicitly state their intentions. This is where AI’s pattern recognition becomes a game-changer.

- Digital Phenotyping: By analyzing patterns in speech, social media activity, and even sleep-wake cycles (captured via wearables), AI can create a “digital phenotype” of a user’s mental state.

- Identifying the “Point of No Return”: New algorithms developed by neuroscientists can now identify linguistic shifts, such as an increase in “absolutist” language (words like always, never, or completely), that often precede a suicide attempt.
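A minimal sketch of how a rise in absolutist language might be measured, assuming a simple dictionary-based word count. The `ABSOLUTIST` word list and the helper names are hypothetical. Published studies use longer, validated dictionaries, and a rising ratio would be a signal for human review, not a diagnosis.

```python
import re

# Hypothetical, deliberately short word list for illustration only.
ABSOLUTIST = {"always", "never", "completely", "totally", "entirely",
              "nothing", "everything", "constantly", "definitely", "whole"}

def absolutist_ratio(text):
    """Fraction of word tokens that appear in the absolutist word list."""
    words = re.findall(r"[a-z']+", text.lower())
    if not words:
        return 0.0
    return sum(w in ABSOLUTIST for w in words) / len(words)

def absolutist_trend(messages):
    """Absolutist ratio of the later half of a chronologically ordered
    message history minus that of the earlier half (positive = rising)."""
    half = len(messages) // 2
    early = absolutist_ratio(" ".join(messages[:half]))
    late = absolutist_ratio(" ".join(messages[half:]))
    return late - early

history = [
    "Had a decent day at work",
    "Dinner with friends was fun",
    "I always mess everything up",
    "Nothing ever works and I am completely done",
]
print(round(absolutist_trend(history), 3))
```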

- Ethical Safeguards and the “Human-in-the-Loop”

While the technology is impressive, the scientific community emphasizes that AI is a bridge to human intervention, not a replacement for it.

“AI doesn’t replace the therapist; it acts as a 24/7 smoke detector for the soul.”

The current gold standard is a “Human-in-the-Loop” system. When the AI detects a high-risk emotional signature through voice analysis, it does not simply end the interaction; it alerts crisis hotlines or emergency contacts and gives them the data they need to intervene effectively.
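The human-in-the-loop pattern can be sketched as a review queue in which the model may only propose an escalation and a trained person makes the final decision. Everything here (`RiskAlert`, `ReviewQueue`, the 0.8 threshold) is a hypothetical illustration, not a description of any deployed crisis-line system.

```python
from dataclasses import dataclass, field

@dataclass
class RiskAlert:
    session_id: str
    risk_score: float          # model output, assumed to lie in [0, 1]
    evidence: list[str]        # signals behind the score, shown to the reviewer

@dataclass
class ReviewQueue:
    """High-risk alerts wait here until a trained person decides what to do."""
    threshold: float = 0.8     # hypothetical cut-off; would need clinical validation
    pending: list[RiskAlert] = field(default_factory=list)

    def submit(self, alert: RiskAlert) -> bool:
        # The model never contacts anyone itself; it can only queue a case.
        if alert.risk_score >= self.threshold:
            self.pending.append(alert)
            return True
        return False

    def resolve(self, reviewer_confirms: bool) -> tuple[str, str]:
        """Take the oldest alert; escalate only if the human reviewer agrees."""
        alert = self.pending.pop(0)
        action = "escalate" if reviewer_confirms else "dismiss"
        return action, alert.session_id

queue = ReviewQueue()
queue.submit(RiskAlert("call-17", 0.93, ["flat pitch", "long pauses"]))
queue.submit(RiskAlert("call-18", 0.35, []))
print(len(queue.pending), queue.resolve(reviewer_confirms=True))
```

Keeping the escalation decision outside the model makes every contact with hotlines or emergency services traceable to a human judgment.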

- The Challenges Ahead

Despite the breakthroughs, two main hurdles remain:

- Privacy: How do we monitor vocal biomarkers without infringing on personal liberty?

- Bias: How do we ensure that Emotional Voice Control understands different accents, languages, and cultural expressions of grief?
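One common way to check for this kind of bias is to break a model's recall (the share of truly at-risk people it flags) down by speaker group and compare the results. The sketch below assumes labeled evaluation data tagged with a group such as accent or language. The helper names are hypothetical, and the group names and labels in the example are invented purely to show the calculation.

```python
from collections import defaultdict

def recall_by_group(records):
    """records: iterable of (group, true_label, predicted_label), 1 = at risk.
    Returns, per group, the share of truly at-risk speakers the model flagged."""
    hits, positives = defaultdict(int), defaultdict(int)
    for group, truth, pred in records:
        if truth == 1:
            positives[group] += 1
            hits[group] += int(pred == 1)
    return {g: hits[g] / positives[g] for g in positives}

def max_recall_gap(records):
    """Difference in recall between the best- and worst-served groups."""
    rates = recall_by_group(records)
    return max(rates.values()) - min(rates.values())

# Invented toy labels, purely to show the calculation.
records = [
    ("accent_A", 1, 1), ("accent_A", 1, 1), ("accent_A", 1, 0), ("accent_A", 0, 0),
    ("accent_B", 1, 1), ("accent_B", 1, 0), ("accent_B", 1, 0), ("accent_B", 0, 1),
]
print(recall_by_group(records), max_recall_gap(records))
```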

The Silent Signal: How E-Vox and AI are Transforming Suicide Prevention

In the realm of mental health, the most dangerous symptoms are often the ones we cannot see. While a broken bone shows up on an X-ray, the path to a mental health crisis is often paved with silence or masked by a “brave face.” However, new scientific discoveries in Emotional Voice Control and E-Vox (Emotional Voice Extraction) technology are changing the game, allowing AI to detect life-threatening distress before it’s too late.

- Understanding E-Vox: The Digital Ear for Emotions

E-Vox is a cutting-edge technology designed to strip away the linguistic content of speech to focus entirely on its emotional “skeleton.” While standard AI listens to vocabulary, E-Vox listens to vibration.

- Acoustic Fingerprinting: E-Vox analyzes over 2,000 different vocal features, including jitter (cycle-to-cycle instability in the pitch period), shimmer (cycle-to-cycle variation in amplitude), and spectral tilt (how quickly vocal energy falls off toward higher frequencies). These aren’t just technical terms; they are physical manifestations of how the brain controls the vocal folds under extreme stress.

- The “Masking” Problem: Many individuals contemplating suicide become experts at “masking,” using calm words to hide internal turmoil. E-Vox is designed to bypass this defense mechanism by monitoring autonomic nervous system responses that are very difficult to fake.
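Jitter and shimmer have standard "local" definitions in the Praat phonetics software: the mean absolute difference between consecutive glottal periods (or per-cycle peak amplitudes), divided by the mean period (or amplitude). A minimal sketch follows, assuming the periods and amplitudes have already been extracted by a pitch-period detector. It omits Praat's period-floor and outlier rules, the helper names are hypothetical, and the input values are made up.

```python
def relative_mean_abs_diff(values):
    """Mean absolute difference between consecutive values, divided by the
    mean value. Applied to periods this is local jitter; applied to per-cycle
    peak amplitudes it is local shimmer."""
    if len(values) < 2:
        raise ValueError("need at least two cycles")
    diffs = [abs(b - a) for a, b in zip(values, values[1:])]
    return (sum(diffs) / len(diffs)) / (sum(values) / len(values))

def local_jitter(periods_s):
    return relative_mean_abs_diff(periods_s)

def local_shimmer(peak_amplitudes):
    return relative_mean_abs_diff(peak_amplitudes)

# Made-up cycle measurements for a voice near 200 Hz.
periods = [0.0050, 0.0051, 0.0049, 0.0050]
amplitudes = [1.0, 0.9, 1.0, 0.95]
print(f"jitter {local_jitter(periods):.2%}, shimmer {local_shimmer(amplitudes):.2%}")
```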

- Predictive AI: Catching the Crisis Before It Peaks

The integration of E-Vox into suicide prevention protocols has led to a shift from reactive to proactive intervention.

The Linguistic Shift

Research shows that as a person moves closer to a suicidal crisis, their language changes in predictable ways. They shift from “we/us” to “I/me,” and their speech becomes saturated with absolutist thinking (words like always, never, completely, or useless). AI models trained on these patterns have been reported to flag high-risk individuals with up to 80-90% accuracy, often weeks before an attempt.
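The pronoun shift can be tracked with a similarly simple count. This sketch, with hypothetical word sets and helper name, measures what share of first-person pronouns in a text are singular. Comparing that value across a person's messages over time would show the move from “we/us” to “I/me” described above.

```python
import re

FIRST_SINGULAR = {"i", "me", "my", "mine", "myself"}
FIRST_PLURAL = {"we", "us", "our", "ours", "ourselves"}

def singular_pronoun_share(text):
    """Share of first-person pronouns that are singular (I/me/...) rather than
    plural (we/us/...). Returns None if the text contains neither."""
    words = re.findall(r"[a-z]+", text.lower())
    singular = sum(w in FIRST_SINGULAR for w in words)
    plural = sum(w in FIRST_PLURAL for w in words)
    total = singular + plural
    return singular / total if total else None

earlier = "We went hiking and our dog loved it"
later = "I feel like my problems are mine alone and I can't talk to anyone"
print(singular_pronoun_share(earlier), singular_pronoun_share(later))
```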

Real-Time Monitoring in Crisis Lines

Imagine a crisis hotline where the operator is supported by an E-Vox co-pilot. As the caller speaks, the AI analyzes the micro-tremors in their voice. If the “Emotional Intensity Score” spikes or shows a sudden, eerie “flatness” (often associated with the calm before a suicide attempt), the system can instantly alert emergency services with a high-priority tag.
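The spike-or-flatness rule in this scenario can be sketched as a rolling-baseline check. The “Emotional Intensity Score” is treated here as a number produced once per second by some upstream model. The `IntensityMonitor` class, the 30-sample window, and both thresholds are hypothetical and would need clinical validation.

```python
from collections import deque
from statistics import mean, pstdev

class IntensityMonitor:
    """Compares each new intensity score with a rolling baseline and flags
    a sudden spike or a sudden drop into unusual flatness."""

    def __init__(self, window=30, spike_z=3.0, flat_ratio=0.2, recent=5):
        self.history = deque(maxlen=window)   # rolling baseline
        self.spike_z = spike_z                # hypothetical thresholds
        self.flat_ratio = flat_ratio
        self.recent = recent                  # samples used for the flatness check

    def update(self, score):
        flag = None
        if len(self.history) == self.history.maxlen:
            base_mu = mean(self.history)
            base_sd = pstdev(self.history) or 1e-9   # avoid division by zero
            if (score - base_mu) / base_sd > self.spike_z:
                flag = "spike"
            else:
                # Flat: the last few scores vary far less than the baseline does.
                tail = list(self.history)[-(self.recent - 1):] + [score]
                if pstdev(tail) < self.flat_ratio * base_sd:
                    flag = "flat"
        self.history.append(score)
        return flag

baseline = [0.4, 0.6] * 15          # synthetic, normally varying scores

spike_flags = [IntensityMonitor().update(s) for s in []]  # placeholder list
spiky = IntensityMonitor()
spike_flags = [spiky.update(s) for s in baseline + [0.95]]
flat = IntensityMonitor()
flat_flags = [flat.update(s) for s in baseline + [0.5] * 5]
print(spike_flags[-1], flat_flags[-5:])
```

Returning a flag instead of acting on it leaves the escalation decision to the operator and whatever policy surrounds the monitor.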

- The Science of Vocal Biomarkers

What exactly is the AI looking for? Scientists have identified specific vocal biomarkers that act as early warning systems:

- Reduced Pitch Range: A “monotone” voice, with little variation in fundamental frequency, is often a physiological byproduct of the cognitive load associated with severe depression.

- Hyper-Nasality: Subtle changes in soft-palate movement, linked to fatigue and psychological distress, that alter the nasal resonance of the voice.

- Breathiness and Pauses: Analyzing breathiness and the duration of silences between words can reveal the “cognitive slowing” associated with suicidal ideation.
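Pause analysis is commonly approached with energy-based silence detection: split the audio into short frames, mark frames whose root-mean-square (RMS) energy falls below a threshold as silent, and measure runs of silent frames. The sketch below assumes that approach. The helper names, the 20 ms frame size, the silence threshold, and the 200 ms minimum pause are hypothetical values that depend on the recording setup, and the test signal is synthetic.

```python
import math

def frame_rms(samples, frame_len):
    """Root-mean-square energy of consecutive, non-overlapping frames."""
    return [math.sqrt(sum(x * x for x in samples[i:i + frame_len]) / frame_len)
            for i in range(0, len(samples) - frame_len + 1, frame_len)]

def pause_durations(samples, sample_rate, frame_ms=20, silence_rms=0.01,
                    min_pause_ms=200):
    """Durations in seconds of silent stretches lasting at least min_pause_ms.
    A frame is 'silent' if its RMS falls below silence_rms (a hypothetical,
    recording-dependent threshold)."""
    frame_len = int(sample_rate * frame_ms / 1000)
    pauses, run = [], 0
    # An infinite sentinel value closes any silent run at the end of the audio.
    for rms in frame_rms(samples, frame_len) + [math.inf]:
        if rms < silence_rms:
            run += 1
        else:
            if run * frame_ms >= min_pause_ms:
                pauses.append(run * frame_ms / 1000)
            run = 0
    return pauses

# Synthetic signal: tone, 300 ms gap, tone, 100 ms gap, tone.
SR = 16000
def tone(ms, freq=200.0, amp=0.5):
    return [amp * math.sin(2 * math.pi * freq * i / SR) for i in range(SR * ms // 1000)]
def gap(ms):
    return [0.0] * (SR * ms // 1000)

signal = tone(500) + gap(300) + tone(500) + gap(100) + tone(200)
print(pause_durations(signal, SR))  # [0.3]
```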

- The Ethical Tightrope: Privacy vs. Protection

With great power comes a significant ethical burden. The use of E-Vox and suicide-prevention AI raises critical questions:

- Consent: Should your phone be “listening” for emotional distress at all times?

- The “False Positive” Risk: What happens if the AI misinterprets a bad day as a life-threatening crisis?

- Data Sovereignty: Who owns the emotional data extracted from your voice?
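The false-positive concern is easiest to see with Bayes' rule: when the condition being screened for is rare, even a fairly accurate classifier produces mostly false alarms. The numbers below are illustrative assumptions (85% sensitivity and specificity, 1 in 200 people at risk), not results from any real study, and the function name is hypothetical.

```python
def positive_predictive_value(sensitivity, specificity, prevalence):
    """Bayes' rule: probability that a flagged person is truly at risk."""
    true_pos = sensitivity * prevalence
    false_pos = (1 - specificity) * (1 - prevalence)
    return true_pos / (true_pos + false_pos)

# Illustrative assumptions only: 85% sensitivity and specificity,
# applied to a population in which 1 in 200 people is at risk.
ppv = positive_predictive_value(0.85, 0.85, 0.005)
print(f"{ppv:.1%} of alerts would be true positives")  # 2.8% of alerts would be true positives
```

Under these assumptions, roughly 97 of every 100 alerts would concern someone who is not in crisis, which is one reason each alert needs human review.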

The scientific community is currently leaning toward “Privacy by Design,” where the AI processes the emotional data locally on the device and only transmits an alert if a specific “danger threshold” is crossed, ensuring the actual conversation remains private.
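“Privacy by Design” can be sketched as a function that runs on the device after a local model has scored the audio and returns, at most, a minimal alert. The function name, the 0.9 threshold, and the payload fields are hypothetical. The point of the sketch is that the score stays local and no audio, transcript, or feature data appears in anything that would be transmitted.

```python
import json
import time

def on_device_alert(risk_score, danger_threshold=0.9, now=None):
    """Runs on the device after a local model has scored the audio.
    Only the return value would ever be transmitted: None below the
    threshold, otherwise a minimal JSON alert with no audio, transcript,
    features, or raw score."""
    if risk_score < danger_threshold:
        return None
    payload = {
        "event": "danger_threshold_crossed",
        "timestamp": int(time.time() if now is None else now),
    }
    return json.dumps(payload)

print(on_device_alert(0.42))                      # None: nothing leaves the device
print(on_device_alert(0.95, now=1_700_000_000))
```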

The synergy between E-Vox and AI is not about replacing human empathy; it’s about augmenting it. By identifying the subtle acoustic signals of despair, we can build a world where no one has to suffer in silence. We are moving toward a future where “reaching out” is no longer the sole responsibility of the victim, but a shared responsibility supported by technology.